The domain

Given a time series of various metrics for various hospitals in a region, I want to find out whether there is any correlation between them. Specifically, I am interested in cases where a metric at one hospital lags behind a metric at another by a day or so.

Initially, my results were great. In fact, they were too good to be true. See, the problem is that hospital metrics all dance to the same tune of time. When you take away the noise, there is a regular weekly rhythm to the stats.

Consequently, it was not a case of one metric predicting another. It was more that both were conforming to a weekly pattern. This was true even when we considered lags between the two series.

For instance, there may be systemic bias at play. In this case, inpatients are often eagerly discharged in time for the weekend, when there are fewer staff on duty. This is true for all hospitals nationally, so we should not assume that one hospital somehow has some unspecified influence on another just because we see them both behaving in the same manner.

In the case of a lag, Mondays see a sharp rise in patients, so we should not take too seriously an (anti-)correlation with the previous Friday at another hospital.
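To see how a shared weekly rhythm on its own can produce convincing correlations, including at a lag, here is a minimal sketch on synthetic data. The weekly profile, noise level and dates are hypothetical and not taken from real hospital data. The two "hospitals" share nothing except the day-of-week pattern.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)

# Hypothetical Mon..Sun admissions profile shared by both hospitals (illustrative numbers)
weekly = np.array([130, 115, 110, 108, 105, 90, 85], dtype=float)
days = pd.date_range("2023-01-02", periods=364, freq="D")  # 2023-01-02 is a Monday
pattern = weekly[days.dayofweek.to_numpy()]  # dayofweek: Monday=0 ... Sunday=6

# Two hospitals with independent noise: neither influences the other
hosp_a = pd.Series(pattern + rng.normal(0, 5, len(days)), index=days)
hosp_b = pd.Series(pattern + rng.normal(0, 5, len(days)), index=days)

# Correlate hospital B today with hospital A `lag` days earlier (NaNs from shift are skipped)
corr_by_lag = {lag: hosp_b.corr(hosp_a.shift(lag)) for lag in range(8)}
for lag, r in corr_by_lag.items():
    print(f"lag {lag}: r = {r:+.2f}")
```

Lags 0 and 7 come out strongly correlated even though, by construction, neither series has any influence on the other.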

The importance of being stationary

The problem we had was that our time series was not stationary. "Making a distribution stationary is a strict requirement in time series forecasting... If a distribution is not stationary, then it becomes tough to model." [BexT, TowardsDataScience]

But what does it mean to be stationary?

Terminology

"A stationary time series is one whose properties do not depend on the time at which the series is observed. Thus, time series with trends, or with seasonality, are not stationary — the trend and seasonality will affect the value of the time series at different times. On the other hand, a white noise series is stationary — it does not matter when you observe it, it should look much the same at any point in time." [Forecasting: Principles and Practice, a free online book]

Note that just because a time series looks highly cyclical to the eye, it doesn't mean it is non-stationary. As a rule of thumb, if you can't predict where it will be in 10 years' time, it is stationary.

Example of highly cyclical, non-stationary data: sales that peak every Christmas.

Example of highly cyclical, stationary data: the populations of foxes and rabbits. Although the populations rise and fall in cycles, the length of each cycle is not fixed, so you cannot predict where the peaks and troughs will fall.

"The [200 day] Google stock price was non-stationary ... but the daily changes were stationary ... This shows one way to make a non-stationary time series stationary — compute the differences between consecutive observations. This is known as differencing.

"Transformations such as logarithms can help to stabilise the variance of a time series. Differencing can help stabilise the mean of a time series by removing changes in the level of a time series, and therefore eliminating (or reducing) trend and seasonality." [ibid]

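Both transformations are one-liners in pandas. The following is a minimal sketch on a synthetic series with roughly 1% growth per step and multiplicative noise (the numbers are illustrative only). Taking logarithms turns the multiplicative growth into an additive trend, and first differencing then removes that trend, leaving values that hover around the growth rate.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(1)
t = np.arange(200)

# Illustrative series: ~1% growth per step with multiplicative noise (not real data)
series = pd.Series(100 * np.exp(0.01 * t + rng.normal(0, 0.02, len(t))))

log_series = np.log(series)   # log turns multiplicative growth and noise into additive terms
diffed = log_series.diff()    # first difference y_t - y_(t-1); the first value is NaN

print(f"mean of raw series, first vs last 50 points: "
      f"{series.iloc[:50].mean():.1f} vs {series.iloc[-50:].mean():.1f}")
print(f"mean of differenced log series: {diffed.mean():.4f}")  # close to the 0.01 growth rate
```

The raw series' mean drifts upwards between the first and last 50 points, whereas the differenced log series has a roughly constant mean.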
The Tools

How do we know that our series is stationary? Jason Brownlee [TowardsDataScience] suggests three approaches: look at plots, use statistical tests, or compare summaries for partitions of the data. I'll look at the first two.
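The third approach is simple enough to sketch: split the series into consecutive chunks and compare each chunk's mean and variance. If the series is stationary, the summaries should be similar. This is a minimal illustration; the helper name `partition_summaries` is hypothetical, and the two synthetic series (white noise and a random walk) are chosen only to contrast a stationary with a non-stationary case.

```python
import numpy as np
import pandas as pd

def partition_summaries(series: pd.Series, n_parts: int = 2) -> pd.DataFrame:
    """Hypothetical helper: split a series into consecutive chunks, report mean and variance."""
    chunks = np.array_split(series.to_numpy(), n_parts)
    return pd.DataFrame(
        {"mean": [c.mean() for c in chunks], "variance": [c.var(ddof=1) for c in chunks]}
    )

rng = np.random.default_rng(2)
noise = pd.Series(rng.normal(0, 1, 1000))          # stationary: white noise
walk = pd.Series(rng.normal(0, 1, 1000).cumsum())  # non-stationary: random walk

print(partition_summaries(noise))
print(partition_summaries(walk))
```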

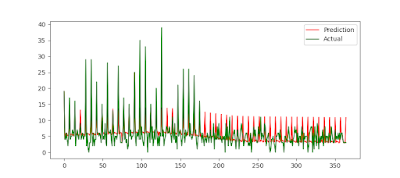

When we plot our data, it certainly doesn't look stationary at first blush, as there seem to be regular troughs and peaks:

[Figure: A&E admissions at two hospitals]

If we zoom in, we can see there is a definite weekly cycle:

[Figure: zoomed in, plus the first-order derivatives]

One trick to make the series stationary is to use the first-order derivatives of the data (that is, the differences between consecutive days) instead of the raw data itself. However, these too seem to be non-stationary (see the figure above).

To check more rigorously whether the series is stationary, we can use the Augmented Dickey-Fuller (ADF) test in StatsModels. Here, the null hypothesis is that the series is non-stationary (time-dependent), so a low p-value is evidence that it is stationary. The p-values were about 0.02 for the raw data, and 7×10⁻⁹ and 8×10⁻²¹ for the first and second derivatives respectively.

But the data and its derivatives still look non-stationary to me (why these ADF tests reported such low p-values is still a mystery to me). Some Fourier analysis seems to suggest that the data is indeed time-dependent:

[Figure: Fourier analysis of the admissions data]

Look at those peaks at a frequency of about 0.14 (≈ 1/7 cycles per day) on the x-axis, which indicate a weekly cycle.
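A minimal sketch of this kind of Fourier check uses NumPy's real FFT on a synthetic daily series (the weekly profile and noise are hypothetical). With a sample spacing of one day, `rfftfreq` reports frequencies in cycles per day, so a weekly cycle shows up at 1/7 ≈ 0.143.

```python
import numpy as np

rng = np.random.default_rng(4)
n_days = 364  # 52 whole weeks
weekly = np.array([130, 115, 110, 108, 105, 90, 85], dtype=float)  # hypothetical Mon..Sun profile
admissions = weekly[np.arange(n_days) % 7] + rng.normal(0, 5, n_days)

# Remove the overall mean so the zero-frequency term doesn't dominate the spectrum
demeaned = admissions - admissions.mean()
power = np.abs(np.fft.rfft(demeaned)) ** 2
freqs = np.fft.rfftfreq(n_days, d=1.0)  # sample spacing of 1 day => cycles per day

peak = freqs[np.argmax(power)]
print(f"strongest frequency: {peak:.3f} cycles/day (period {1 / peak:.1f} days)")
```

Because 364 days is a whole number of weeks, 1/7 falls exactly on a frequency bin. The weekly shape is not a pure sine wave, so its harmonics at 2/7 and 3/7 cycles per day also carry power.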

Another way to make the data stationary is to center it. With hospitals, we know how to do this: admissions are strongly correlated with the day of the week, so we subtract from each day's admissions the mean for that day of the week. After doing this, the Fourier analysis looks like:

[Figure: Fourier analysis of the same admissions, centered on their day-of-week means]

Much better! The largest Augmented Dickey-Fuller p-value is 2×10⁻⁷, and the time series of centered data also looks more likely to be stationary:

[Figure: the centered admissions data for two nearby hospitals]
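The day-of-week centering described above can be sketched in pandas with a `groupby` on the weekday followed by `transform("mean")`. This is a minimal illustration on synthetic data; the weekly profile and noise are hypothetical.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(5)
days = pd.date_range("2023-01-02", periods=364, freq="D")
weekly = np.array([130, 115, 110, 108, 105, 90, 85], dtype=float)  # hypothetical Mon..Sun profile
admissions = pd.Series(weekly[days.dayofweek.to_numpy()] + rng.normal(0, 5, len(days)), index=days)

# For each observation, subtract the mean of all observations falling on the same weekday
dow_mean = admissions.groupby(admissions.index.dayofweek).transform("mean")
centered = admissions - dow_mean

# Every weekday now averages to (numerically) zero
print(centered.groupby(centered.index.dayofweek).mean().round(10))
```

After centering, what remains is the day-to-day variation that the weekly pattern does not explain, which is the part worth testing for lagged relationships.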

Now, we can run a Granger Causality test. Here "we reject the null hypothesis that x2 does not Granger cause x1 if the p-values are below a desired size of the test." [StatsModels docs]. In this test, x1 and x2 are the first and second columns of the input.

Conclusion

Granger Causality comes with caveats, one of which is that your data must be stationary. One reason is that making your data stationary avoids confounding factors that cause spurious relationships [Wikipedia].

Making your time series stationary may require some domain knowledge.